Material Appearance Modeling and Rendering

We predict the appearance of materials from their physical microstructure

The appearance of a material is largely determined by the microstructure of its surface and the particles beneath its surface. We construct mathematical models for predicting material appearance based on surface properties and particle content. We also investigate the challenges of fast and accurate rendering of material appearance. The goal is to interactively compute realistic images of real-world materials.

About the project

Contact

Jeppe Revall Frisvad

[Associate Professor]

Technical University of Denmark

Publications

Digitizing the appearance of 3D printing materials using a spectrophotometer [2024]

A. Pranovich, M. R. Hannemose, J. N. Jensen, D. M. Tran, H. Aanæs, S. Gooran, D. Nyström, J. R. Frisvad. Sensors, 24(21), 7025

NeuPreSS: compact neural precomputed subsurface scattering for distant lighting of heterogeneous translucent objects [2024]

T. TG, J. R. Frisvad, R. Ramamoorthi, H. W. Jensen. Computer Graphics Forum, 43(7), e15234

Neural SSS: lightweight object appearance representation [2024]

T. TG, D. M. Tran, H. W. Jensen, R. Ramamoorthi, J. R. Frisvad. Computer Graphics Forum, 43(4), e15158

Digitizing translucent object appearance by validating computed optical properties [2024]

D. M. Tran, M. B. Jensen, P. Santafé-Gabarda, S. Källberg, A. Ferrero, M. R. Hannemose, J. R. Frisvad. Applied Optics, 63(16), 4317-4331

Multi-Scale Radiative Transfer Simulation for the Scattering of Light by Microgeometry [2020]

V. Falster. Department of Applied Mathematics and Computer Science, Technical University of Denmark

Alignment of rendered images with photographs for testing appearance models [2020]

M. Hannemose, M. E. B. Doest, A. Luongo, S. K. S. Gregersen, J. Wilm, J. R. Frisvad. Applied Optics, 59(31), 9786-9798

Computing the bidirectional scattering of a microstructure using scalar diffraction theory and path tracing [2020]

V. Falster, A. Jarabo, J. R. Frisvad. Computer Graphics Forum (PG 2020), 39(7), 231-242

Survey of models for acquiring the optical properties of translucent materials [2020]

J. R. Frisvad, S. A. Jensen, J. S. Madsen, A. Correia, L. Yang, S. K. S. Gregersen, Y. Meuret, P. Hansen. Computer Graphics Forum (EG 2020), 39(2), 729-755

Predicting and 3D Printing Material Appearance [2019]

A. Luongo. Department of Applied Mathematics and Computer Science, Technical University of Denmark

Towards Interactive Photorealistic Rendering [2018]

A. Dal Corso. Department of Applied Mathematics and Computer Science, Technical University of Denmark

Modeling the anisotropic reflectance of a surface with microstructure engineered to obtain visible contrast after rotation [2017]

A. Luongo, V. Falster, M. B. Doest, D. Li, F. Regi, Y. Zhang, G. Tosello, J. B. Nielsen, H. Aanæs, J. R. Frisvad. Proceedings of International Conference on Computer Vision Workshop (ICCVW 2017), IEEE, 159-165

Scene reassembly after multimodal digitization and pipeline evaluation using photorealistic rendering [2017]

J. D. Stets, A. Dal Corso, J. B. Nielsen, R. A. Lyngby, S. H. N. Jensen, J. Wilm, M. B. Doest, C. Gundlach, E. R. Eiriksson, K. Conradsen, A. B. Dahl, J. A. Bærentzen, J. R. Frisvad, H. Aanæs. Applied Optics, 56(27), 7679-7690

Interactive appearance prediction for cloudy beverages [2016]

A. Dal Corso, J. R. Frisvad, T. K. Kjeldsen, J. A. Bærentzen. Workshop on Material Appearance Modeling (MAM 2016), The Eurographics Association, 1-4

Hybrid fur rendering: combining volumetric fur with explicit hair strands [2016]

T. G. Andersen, V. Falster, J. R. Frisvad, N. J. Christensen. The Visual Computer, 32(6), 739-749

Interactive directional subsurface scattering and transport of emergent light [2016]

A. Dal Corso, J. R. Frisvad, J. Mosegaard, J. A. Bærentzen. The Visual Computer, 33(3), 371-383

Quality assurance based on descriptive and parsimonious appearance models [2015]

J. B. Nielsen, E. R. Eiriksson, R. L. Kristensen, J. Wilm, J. R. Frisvad, K. Conradsen, H. Aanæs. Workshop on Material Appearance Modeling, The Eurographics Association, 21-24

Alignment of rendered images with photographs for testing appearance models

Abstract

We propose a method for direct comparison of rendered images with a corresponding photograph in order to analyze the optical properties of physical objects and test the appropriateness of appearance models. To this end, we provide a practical method for aligning a known object and a point-like light source with the configuration observed in a photograph. Our method is based on projective transformation of object edges and silhouette matching in the image plane. To improve the similarity between rendered and photographed objects, we introduce models for spatially varying roughness and a model where the distribution of light transmitted by a rough surface influences direction-dependent subsurface scattering. Our goal is to support development toward progressive refinement of appearance models through quantitative validation.

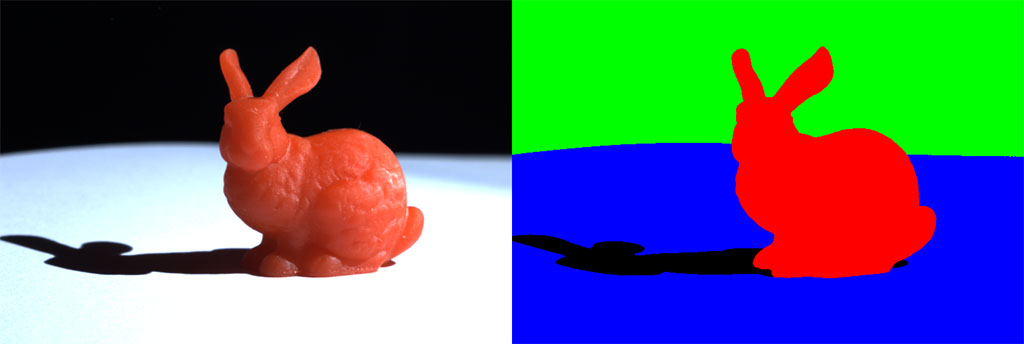

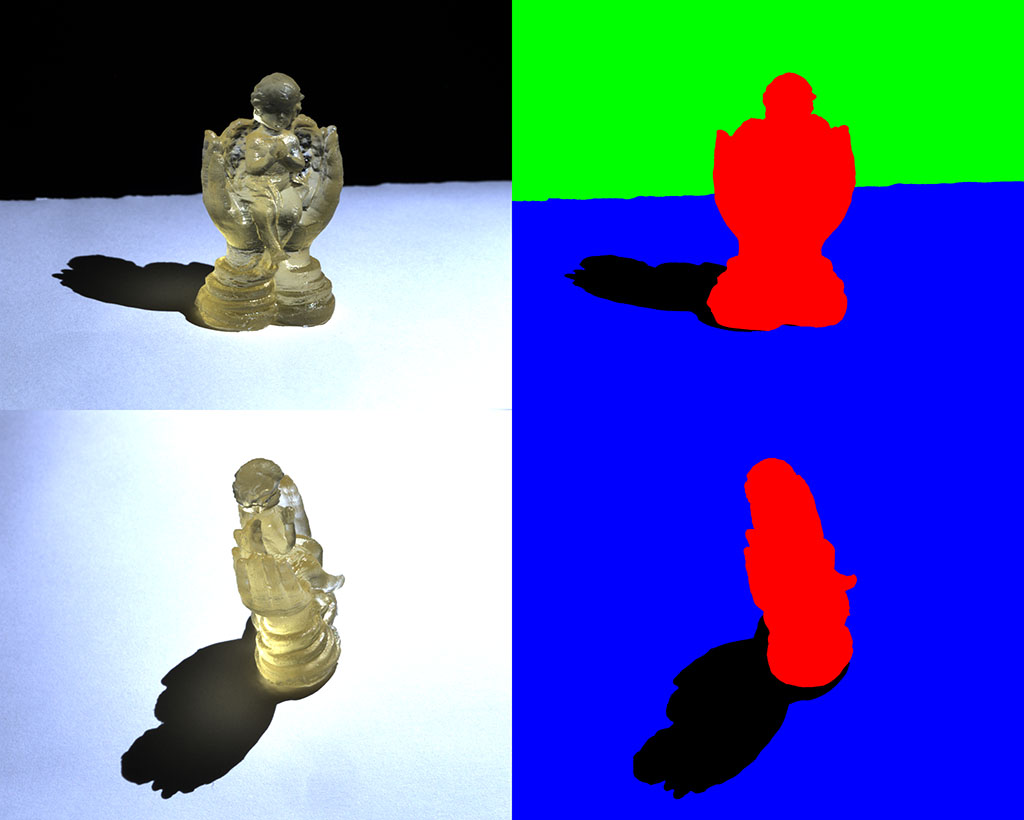
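The abstract mentions projecting a known object into the image plane and matching silhouettes. As a rough illustration only, the sketch below projects a camera-space point with a simple pinhole model and scores the overlap of two binary silhouettes with intersection over union (IoU). This is not the paper's alignment procedure: the pinhole parameters (`focal`, `cx`, `cy`), the function names, and the choice of IoU as the overlap measure are all assumptions made for this example.

```python
def project_point(point, focal, cx, cy):
    """Pinhole projection of a camera-space point (x, y, z) with z > 0.

    focal, cx, cy are assumed intrinsics (focal length and principal
    point in pixels); they are illustrative, not taken from the paper.
    """
    x, y, z = point
    if z <= 0:
        raise ValueError("point must lie in front of the camera (z > 0)")
    return (focal * x / z + cx, focal * y / z + cy)


def silhouette_iou(mask_a, mask_b):
    """Intersection over union of two binary masks.

    Each mask is a list of rows with truthy values inside the silhouette.
    Both masks are assumed to have the same dimensions.
    """
    intersection = 0
    union = 0
    for row_a, row_b in zip(mask_a, mask_b):
        for a, b in zip(row_a, row_b):
            if a and b:
                intersection += 1
            if a or b:
                union += 1
    # Two empty silhouettes are treated as a perfect match.
    return intersection / union if union else 1.0


# Hypothetical 2x2 masks: rendered silhouette vs. photographed segmentation.
rendered = [[1, 1], [0, 0]]
photographed = [[1, 0], [0, 0]]
overlap = silhouette_iou(rendered, photographed)
pixel = project_point((1.0, 2.0, 2.0), focal=100.0, cx=50.0, cy=50.0)
```

An overlap score of this kind could serve as the quantity to maximize when adjusting an estimated pose, although the paper should be consulted for the objective it actually uses.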

Dataset

The dataset can be used to evaluate rendering techniques or for inverse rendering. It consists of photographs of three objects (a bunny, an angel, and a bust), taken from different viewpoints and with different light source positions. Each image has a segmentation, and the estimated camera pose and light source position are stored in a JSON file. A triangle mesh of the object accompanies the images. Please cite the paper if you use the data.

The image of the bunny along with the segmentation.

Images of the angel, shown with segmentations. Two camera poses and one light source position.

Images of the bust taken from four different camera positions, each with five different light source positions. Segmentations are also available.
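As a minimal sketch of how the per-image JSON metadata might be read, the example below parses a camera pose and a light source position and expresses the light position in camera coordinates. The layout of the dataset's JSON file is not documented here, so the key names `camera_pose` and `light_position`, the 4x4 row-major world-to-camera convention, and the inline example record are all hypothetical and would need to be adapted to the actual files.

```python
import json


def load_view(json_text):
    """Parse one view record from JSON text.

    Assumed (hypothetical) layout:
      "camera_pose":    4x4 world-to-camera matrix, list of rows
      "light_position": [x, y, z] in world coordinates
    """
    record = json.loads(json_text)
    pose = record["camera_pose"]
    light = record["light_position"]
    if len(pose) != 4 or any(len(row) != 4 for row in pose):
        raise ValueError("camera_pose must be a 4x4 matrix")
    if len(light) != 3:
        raise ValueError("light_position must have three components")
    return pose, light


def to_camera_space(pose, point):
    """Apply a 4x4 world-to-camera matrix to a 3D point."""
    x, y, z = point
    homogeneous = (x, y, z, 1.0)
    return tuple(
        sum(pose[r][c] * homogeneous[c] for c in range(4)) for r in range(3)
    )


# Made-up record for illustration; not taken from the dataset.
example = """
{
  "camera_pose": [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, -2], [0, 0, 0, 1]],
  "light_position": [0.5, 1.0, 3.0]
}
"""
pose, light = load_view(example)
light_in_camera = to_camera_space(pose, light)
```

If the actual files store a camera-to-world matrix or separate rotation and translation fields instead, the transform would need to be inverted or assembled accordingly.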

BibTeX Reference

@article{hannemose2020alignment,
  title = {Alignment of rendered images with photographs for testing appearance models},
  author = {Morten Hannemose and Mads Emil Brix Doest and Andrea Luongo and S{\o}ren Kimmer Schou Gregersen and Jakob Wilm and Jeppe Revall Frisvad},
  journal = {Applied Optics},
  year = {2020},
  month = {October},
  url = {https://people.compute.dtu.dk/jerf/papers/alignment_lowres.pdf},
}